Regular readers of this blog will be well aware that the polls have been consistently disagreeing with each other in their estimates of how many people propose to vote Yes and No. Three companies, ICM, Panelbase and Survation, have tended to produce higher estimates of the Yes vote (and thus lower estimates of the No vote) than Ipsos MORI, TNS BMRB or YouGov.

It was perhaps inevitable that at some point someone would light the blue touch paper and start a debate about which pollsters were right and which wrong. In the end the matchbox was seized by Peter Kellner of YouGov, who in a blog posted on his company’s website a fortnight ago argued that, ‘A number of recent polls have produced widely-reported stories that the contest is close. They are wrong. It isn’t.’

Kellner took particular aim at the polls that have been conducted by Survation. He queried why in Survation’s polls far more people say they voted SNP in the 2010 Westminster election than actually did so. At the same time, he noted that when in June Survation asked people how they had voted in the European elections the previous month, as many as 39% of those who cast a ballot said that they voted for the SNP; in contrast, when YouGov themselves conducted a similar exercise they replicated the actual SNP tally of 29% exactly. Between them, Kellner argued, these patterns were evidence of apparent pro-SNP bias in Survation’s samples. He went on to suggest that a likely reason for this bias is that Survation’s samples (and by implication those of the other companies that secure a relatively high Yes vote too) contained too many ‘passionate Nats’, that is long-standing supporters of the party, and not enough ‘passing Nats’, that is people who voted for the party for the first time in 2011. The latter, he surmised, were less likely to back independence than the former.

There is a back-story to Kellner’s criticism. All of the pollsters, apart from Ipsos MORI, weight their samples so that how people say they voted in the 2011 election more or less matches the actual distribution of the vote in 2011. But to do this most pollsters have to ask their respondents afresh on each survey what they did in 2011. However, because they maintain their own panel of potential respondents, YouGov were able to ask their panel members shortly after the 2011 election how they had just voted. Thus for those people who were a YouGov panel member in 2011 at least (and of course not all current panel members will have been), the company do not have to ask their respondents what they did three years ago, because the information has already been collected.
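For readers who want to see the mechanics, past vote weighting can be sketched in a few lines of Python. This is only an illustration of the general idea, not any pollster's actual procedure: the ten respondents, their answers, the target shares (round numbers, not the official 2011 result) and the function names are all invented for the example.

```python
from collections import Counter


def past_vote_weights(recalled_votes, target_shares):
    """Give each respondent a weight so that the weighted distribution of
    recalled past vote matches target_shares (which should sum to 1)."""
    counts = Counter(recalled_votes)
    n = len(recalled_votes)
    # weight = share the group should have / share it has in the sample
    return [target_shares[vote] / (counts[vote] / n) for vote in recalled_votes]


def weighted_share(answers, weights, category):
    """Weighted proportion of respondents whose answer equals category."""
    matching = sum(w for a, w in zip(answers, weights) if a == category)
    return matching / sum(weights)


# Hypothetical sample: too many respondents recall voting SNP in 2011.
recalled_2011 = ["SNP"] * 6 + ["Labour"] * 2 + ["Other"] * 2
referendum = ["Yes", "Yes", "Yes", "Yes", "Yes", "No",  # recalled SNP
              "No", "No",                                # recalled Labour
              "Yes", "No"]                               # recalled Other

# Illustrative targets only, NOT the official 2011 result.
targets = {"SNP": 0.45, "Labour": 0.32, "Other": 0.23}

weights = past_vote_weights(recalled_2011, targets)
unweighted_yes = referendum.count("Yes") / len(referendum)
weighted_yes = weighted_share(referendum, weights, "Yes")
print(f"Yes before weighting: {unweighted_yes:.0%}, after: {weighted_yes:.0%}")
```

In this made-up sample the SNP recallers are weighted down and the others up, so the Yes share falls once the weights are applied. How far weighting moves the figure in a real poll depends entirely on how unrepresentative the unweighted sample was in the first place.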

This procedure has two potential advantages. First, it means that YouGov are less reliant on people having to remember accurately what they did as long as three years ago. Second, it has enabled the company uniquely to include in its past vote weighting procedure a separate category for people who voted for the SNP in 2011 but backed Labour at the previous election in 2010 (in effect ‘passing Nats’). In other words, Kellner’s criticism draws heavily on where it might be thought that YouGov have a comparative advantage over their rivals.

Survation themselves made three main points in response. First, although more voters say they voted SNP at the Westminster election than actually did so (something that ICM and Panelbase had both independently discovered as long ago as September of last year), the pattern of reported voting in 2011 in Survation’s polls is typically close to the actual result. So people do seem able to remember reasonably accurately what they did on that occasion at least. Consequently, and crucially, weighting by 2011 reported vote usually makes little if any difference to Survation’s estimate of the level of Yes and No support. Second, Survation noted that a poll of European voting intentions that YouGov conducted in April (some weeks, it should be said, before polling day) overestimated Labour’s eventual tally by five points, whereas both Survation’s and ICM’s polls were very close to the actual outcome. Third, they observed that when YouGov asked their respondents whether they had voted in the European elections, no less than 67% said that they did, compared with an official turnout of 33.5%. In other words, Survation implied, when it comes to the evidence of the European elections some awkward questions could be asked about the representativeness of YouGov’s samples too.

What should we make of this dispute? Perhaps the first point to make is that Kellner is quite right to point out that YouGov’s polls appear to have fewer ‘passionate Nats’ in that they consistently uncover a higher proportion of 2011 SNP voters who say they intend to vote No. On average in their last four polls YouGov have reported that, after Don’t Knows have been excluded, as many as 26% of those who voted for the SNP say they will vote No to independence. In contrast the equivalent figure for Survation is 21%, for ICM 17%, and for Panelbase 16%. So at first glance Kellner would appear to have identified a crucial reason why YouGov’s figures are different (albeit one on which Survation is the closest of the three to YouGov’s own figures).

How far this difference arises because YouGov weight separately those who say they voted Labour in 2010 but SNP in 2011 is, however, impossible to tell from publicly available information. What, though, can be noted, is that sometimes this group is being upweighted quite considerably in YouGov’s polls – in some instances by almost a factor of two – so potentially quite a lot of influence is being given to a relatively small group of respondents.
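To give a rough sense of what upweighting by a factor of two means, the short Python sketch below works out how large a slice of the weighted sample a small group ends up occupying. The figures are hypothetical (60 such respondents in a sample of 1,000) and, for simplicity, everyone else is left at a weight of one; neither assumption comes from YouGov's published tables.

```python
def weighted_group_share(group_size, group_weight, rest_size, rest_weight=1.0):
    """Share of the weighted sample taken up by a group whose members all
    carry group_weight, when the remaining respondents carry rest_weight."""
    group_total = group_size * group_weight
    rest_total = rest_size * rest_weight
    return group_total / (group_total + rest_total)


# Hypothetical poll: 60 of 1,000 respondents voted Labour in 2010, SNP in 2011.
share_unweighted = weighted_group_share(60, 1.0, 940)
share_upweighted = weighted_group_share(60, 2.0, 940)  # weight of two

print(f"Share of sample before upweighting: {share_unweighted:.1%}")
print(f"Share of sample after upweighting:  {share_upweighted:.1%}")
```

Whatever the exact numbers in a given poll, the point is the same: once a group is doubled in weight, any sampling quirk in the handful of people who make it up is carried into the headline figure with roughly double the force.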

But in any event what we should also note is that YouGov’s polls are not just different from those of Survation, ICM and Panelbase in tending to contain a higher proportion of 2011 SNP voters who say they will vote No. They also contain a rather lower proportion of 2011 Labour voters who state that they will vote Yes. In YouGov’s four most recent polls that figure has averaged 21%, whereas Survation put it at 30% and both ICM and Panelbase at 27%. In other words, the difference between YouGov and their rivals in their estimate of Yes strength is not confined to the reported intentions of those who voted SNP in 2011, but is also evident amongst those who said they backed Labour. That suggests that the difference between the pollsters is more than just a question of whether they have too many or too few ‘passionate’ and ‘passing’ nationalists.

Indeed, it seems to have very little to do with questions of weighting at all, whether by past vote or by any other consideration. The truth is, as I demonstrated in a recent number of the academic journal Scottish Affairs, all of the pollsters tend to face similar difficulties in securing representative samples of the Scottish electorate; most polls tend to interview too few men, younger people and those in the working class ‘C2DE’ social grades, all of which are groups where Yes support is typically rather higher. Consequently, in most polls the effect of weighting is to increase the Yes share of the vote by a couple of points or so. In this respect YouGov are not very different from anyone else, the one exception being that their polls are typically not short of men.

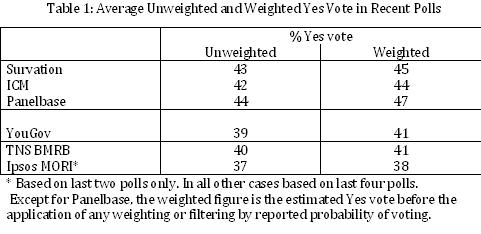

The pattern can be seen in the following table, which shows the average weighted and unweighted figures in the last four polls to be conducted by each of the six companies that have been polling regularly during the referendum.

More importantly, one other crucial point emerges from the table. The difference between the polls in their estimate of the Yes vote is evident in the figures that they obtain before any weighting or filtering is applied. In other words, Survation together with ICM and Panelbase are simply finding more Yes voters than are their rivals in the first place. The answer to the question, ‘Why are the polls different?’ lies in the fact that they are obtaining different samples, but why that is the case remains, alas, a mystery.

About the author

John Curtice is Professor of Politics at Strathclyde University, Senior Research Fellow at ScotCen and at 'UK in a Changing Europe', and Chief Commentator on the What Scotland Thinks website.